Researchers at healthcare cybersecurity company Cynerio just published a report about five cybersecurity holes they found in a hospital robot system called TUG.

TUGs are pretty much robot cabinets or platforms on wheels, apparently capable of carrying up to 600kg and rolling along at just under 3km/hr (a slow walk).

They’re apparently available in both hospital variants (e.g. for transporting medicines in locked drawers on ward rounds) and hospitality variants (e.g. conveying crockery and crumpets to the conservatory).

During what we’re assuming was a combined penetration test/security assessment job, the Cynerio researchers were able to sniff out traffic to and from the robots in use, track the network exchanges back to a web portal running on the hospital network, and from there to uncover five non-trivial security flaws in the backend web servers used to control the hospital’s robot underlords.

In a media-savvy and how-we-wish-people-wouldn’t-do-this-but-they-do PR gesture, the researchers dubbed their bugs The JekyllBot Five, dramatically stylised JekyllBot:5 for short.

Despite the unhinged, psychokiller overtones of the name “Jekyllbot”, however, the bugs don’t have anything to do with AI gone amuck or a robot revolution.

The researchers also duly noted in their report that, at the hospital where they were investigating with permission, the robot control portal was not directly visible from the internet, so a would-be attacker would have already needed an internal foothold to abuse any of the bugs they found.

Unauthenticated access to everything

Nevertheless, it was just as well that the hospital’s own network was shielded from the internet.

With TCP access to the server running the web portal, the researchers claim that they could:

- Access and alter the system’s user database. They were apparently able to modify the rights given to existing users, to add new users, and even to assign users administrative privileges.

- Snoop on trivially-hashed user passwords. With a username to add to a web request, they could recover a straight, one-loop, unsalted MD5 hash of that user’s password. In other words, with a precomputed list of common password hashes, or an MD5 rainbow table, many existing passwords could easily be cracked.

- Send robot control commands. According to the researchers, TCP-level access to the robot control server was enough to issue unauthenticated commands to currently active robots. These commands included opening drawers in the robot’s cabinet (e.g. where medications are supposedly secured), cancelling existing commands, recovering the robot’s location and altering its speed.

- Take photos with a robot. The researchers showed sample images snapped and recovered (with authorisation) from active robots, including pictures of a corridor, the inside of an elevator (lift), and a shot from a robot approaching its charging station.

- Inject malicious JavaScript into legitimate users’ browsers. The researchers found that the robot management console portal was vulnerable to various types of cross-site scripting (XSS) attack, which could allow malware to be foisted on legitimate users of the system.
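To see why a single, unsalted MD5 hash offers so little protection, here is a minimal Python sketch of the precomputed-lookup idea mentioned in the list above. The word list and the function names are our own illustrative assumptions, not anything taken from the researchers' report, and a real attacker's list would run to millions or billions of entries.

```python
import hashlib


def md5_hex(text: str) -> str:
    """Return the unsalted, single-pass MD5 hash of text as hex."""
    return hashlib.md5(text.encode("utf-8")).hexdigest()


def build_lookup(candidates):
    """Precompute a hash -> password table from a list of guesses."""
    return {md5_hex(word): word for word in candidates}


# Hypothetical, tiny list of common passwords (an assumption for the demo).
COMMON_PASSWORDS = ["123456", "password", "letmein", "qwerty"]
table = build_lookup(COMMON_PASSWORDS)

# Pretend this hash was recovered from the server for some username.
leaked_hash = md5_hex("letmein")

# With no salt, every user who chose the same password has the same hash,
# so one dictionary lookup is all it takes to reverse it.
print(table.get(leaked_hash, "not in table"))
```

Because there is no per-user salt, the table only has to be built once and can then be reused against every account on every server that stores passwords the same way.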

XSS revisited

Cross-site scripting is where website X can be tricked into serving up HTML content for display that, when loaded into the visitor’s browser, is actually interpreted as JavaScript code and executed instead.

This typically happens when a web server tries to display some text, such as a robot ID or ward name, but that text itself contains HTML control tags that get passed through unaltered.

Imagine, for example, that a server wanted to display a ward name, but the name were stored not as NORTH WARD, but as <script>...</script>.

The server would need to take great care not to pass through the <script> tag directly, because that character sequence tells the browser, “What comes next is a JavaScript program; execute it with all the privileges any script officially stored on the server would have.”

Instead, the server would need to recognise the “dangerous” HTML tag delimiter < (less-than sign), and convert it to the safe-for-display code &lt;, which means, “Actually display a less-than sign, don’t treat it as a magic tag marker.”
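The escaping step described above can be sketched in a few lines of Python using the standard library's html.escape() function. The render_ward_label() helper and the table-cell markup are hypothetical names of our own, chosen only to illustrate the idea; we don't know how the real portal builds its pages.

```python
import html


def render_ward_label(ward_name: str) -> str:
    """Build an HTML table cell that displays ward_name as plain text.

    html.escape() converts <, >, & and quote characters into HTML
    entities, so the browser shows them instead of interpreting them.
    """
    return f"<td>{html.escape(ward_name)}</td>"


# An ordinary name passes through unchanged.
print(render_ward_label("NORTH WARD"))

# A booby-trapped "name" is neutralised rather than executed.
print(render_ward_label("<script>alert(1)</script>"))
```

In practice, a templating system that escapes output by default is usually a safer choice than remembering to call an escaping function by hand at every point where text is inserted.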

Attackers can, and do, use XSS bugs to trick even well-informed users – the sort of users who routinely check the URLs in their address bar and who avoid using links or attachments they weren’t expecting – into automatically running malicious script code under the apparently safe umbrella of a server they assume they can trust.

What to do?

- Divide and conquer. A firewall alone is not enough to protect you from cyberattacks, not least because cybercriminals may have a foothold inside your network already, meaning that their ultimate attack doesn’t originate from outside. But that’s no reason to expose all of your network to anyone who wants to have a poke about. In this case, the fact that the robot portal was shielded from the internet gave the hospital some breathing space to react to the researchers’ report while the vendor worked on the responsibly-disclosed bugs.

- Never rely on obscurity for security. You aren’t obliged to reveal every detail of your network arrangements, and you aren’t obliged to make your internal network visible to the world. Relying on “no one noticing” how your robot control process works is not enough. In this report, the researchers quickly found their way to the insecure web server simply by recording what happened when a robot got to an elevator. (In another infamous case, a casino was breached due to an obscure but insecure networked electronic pump in the aquarium in the lobby.)

- Always use HTTPS. One aspect of the report that the researchers themselves didn’t go into is that all the (redacted) interactions they showed between robots and the web backend referred to http:// URLs, and used TCP ports often associated with unencrypted traffic (80, 8080, 8081). Without https://, we assume that a manipulator-in-the-middle (MiTM) attack would have been possible even in the absence of these bugs, making it feasible to snoop on robot locations, image uploads and control messages, and even to manipulate them in transit, without being stopped or detected. Always use TLS (transport layer security) to put the S-for-security in https://.

- React quickly to bug reports. These bugs were responsibly disclosed (technically, this means they aren’t really zero-day bugs, despite how the researchers describe them) and the vendor apparently came out with patches both to its server code and its robot firmware in the agreed time before the researchers went public. This is one reason for setting responsible disclosure deadlines – a “secrecy period” is usually agreed to give vendors enough time to get protection out for everyone who wants it before the world gets told about the exploits, but the agreed period is not so long that don’t-care vendors can simply put off patching indefinitely.

- Store passwords securely. Use a recognised salt-hash-stretch technique so that passwords can be verified within a reasonable time when a user formally logs on, but so that if the password verification hashes get leaked or stolen, crackers can’t simply try out billions of likely passwords an hour until they get lucky and figure out which input will give an MD5 hash of eeeefdf77f1ac2eb5cdb7cf82ad48b9a. (Try it: that one took us under a second to crack.)

- Validate thine inputs and thine outputs. If you’re reading in data that you plan to insert into a web page that is sent back with the imprimatur of your own servers, make sure you aren’t blindly copying or echoing back characters that have a potentially dangerous meaning when they reach the other end. Notably, generated website HTML content shouldn’t be allowed to include any text strings that control how a web page gets processed, such as tags and other HTML “magic” codes.
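As an illustration of the salt-hash-stretch advice in the list above, here is a minimal Python sketch using PBKDF2 from the standard library. The function names, the 16-byte salt and the iteration count are assumptions of ours for the sake of the example; PBKDF2 is just one recognised option (scrypt, bcrypt and Argon2 are others), and you should pick parameters to suit your own hardware and current guidance.

```python
import hashlib
import hmac
import os

# Assumed work factor for this sketch: tune it so one check is
# affordable at login but expensive to repeat billions of times.
ITERATIONS = 600_000


def make_verifier(password: str, iterations: int = ITERATIONS):
    """Return (salt, iterations, digest) to store instead of the password."""
    salt = os.urandom(16)  # fresh random salt for every user
    digest = hashlib.pbkdf2_hmac(
        "sha256", password.encode("utf-8"), salt, iterations
    )
    return salt, iterations, digest


def check_password(password: str, salt: bytes, iterations: int,
                   expected: bytes) -> bool:
    """Recompute the stretched hash and compare it in constant time."""
    candidate = hashlib.pbkdf2_hmac(
        "sha256", password.encode("utf-8"), salt, iterations
    )
    return hmac.compare_digest(candidate, expected)


salt, rounds, stored = make_verifier("correct horse battery staple")
print(check_password("correct horse battery staple", salt, rounds, stored))
print(check_password("letmein", salt, rounds, stored))
```

The salt means that two users with the same password get different stored values, so a precomputed table is no help, and the stretching means each individual guess costs an attacker far more than a single MD5 calculation.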

Although the researchers behind the name JekyllBot seem to have indulged themselves with dramatic examples of how these bugs might be used to wreak low-speed/high-torque robotic havoc in a hospital corridor, for example by describing robots “crashing into staff, visitors and equipment”, and attackers “wreak[ing] havoc and destruction at hospitals using the robots”…

…they also make the point that these bugs could result in the more pedestrian-sounding but no less dangerous side effect of helping attackers implant malware on the computers of unsuspecting internal users.

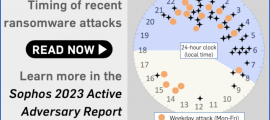

And healthcare malware attacks, very sadly, often turn out to involve ransomware, which typically ends up derailing a lot more than just the hospital’s autonomous delivery robots.